UVM has been a massive success. There's no doubt about that. For the first time it showed the chip industry the benefits of having a common framework. You can hire directly for UVM skills. Vendors provide UVM models for interfacing their IP. There are tools for generating UVM registers and other boilerplate code. There is training available and forums for asking UVM-related questions.

But it wasn't always like that. I remember when UVM was still not widely adopted. A lot of companies said "Weeeell, I'm sure this UVM thing is very good for other companies, but you know, our needs are a bit special". It's funny how all those special needs just suddenly disappeared when the economic benefits of not having to deal with your own framework and being able to easily hire people and get VIP from vendors became apparent.

So UVM has been a massive success. It has become so ubiquitous that many people in the industry seem to believe it has some magical properties and that it's the only way to verify chips. But, frankly speaking, it's not really that good of a framework. It's clunky and suffers from a lot of legacy. Many companies I talk with don't actually use it as is but have written some custom framework on top, and you can find plenty of tools to generate UVM, which means we end up with a boatload of incompatible framework generators instead. But the biggest issue is that it's written in SystemVerilog.

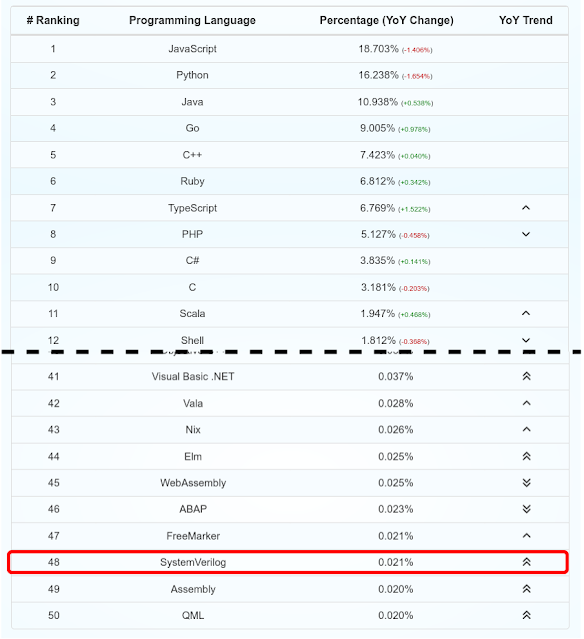

Oh no! Will this turn into one of those language wars again? Maybe, but we can't ignore the fact that there are probably 1000 JavaScript, Python or Java developers for each Verilog coder. (System)Verilog (or VHDL for that matter) barely scrapes the bottom of the top 50 most popular language lists. "Nonsense!", I hear my fellow chip design engineers mumble, "Everyone knows Verilog". Well, there's a word for that. Survivor bias. Everyone in the semiconductor industry knows Verilog because those who couldn't stand the language just went elsewhere. And this is a huge problem for the industry. On top of an aging demographic, we have issues keeping the youngsters interested when there are other, fancier languages and environments out there.

GitHub Language Stats (https://madnight.github.io/githut)

"But! But!...", you argue, "...you need Verilog to work with chip design". I won't argue that in practice this is true to some degree because there's a whole lot of verilog out there, but in theory Verilog doesn't say absolutely anything about how chips work. It's just a programming language, which original intended purpose was to describe chip behavior. Remember, Verilog wasn't ever meant to be used to implement chips, which is a fact that tends to get forgotten many times. As another example of this, look at Erlang, which was created to program telephone switches. This means neither that Erlang can't be used for other things, nor that Erlang is the only way to program telephone switches.

"Still.. ", I hear from the back of the room, "..can't they just learn SystemVerilog? It's like C++, sort of". That misses a large part of what makes a language successful. True, it's a C-like syntax to some extent (mixed up with Java and a hodge-podge of 90's language ideas), but you don't have access to your toolbox of C++ tools like linters, debugger, syntax highlighters, IDEs, sanitizers and everything else that makes you productive. While the chip designers might think SystemVerilog is the best option because it has the largest ecosystem in this domain, this ecosystem is a drop in the ocean compared to popular languages.

And I'm 100% certain that in many cases, although it's beneficial, you don't need to know a single thing about how chips work and you can still do a great job of verifying the functionality of some IP core. Let's turn things around for a while. I have spent the past ten years developing military radar, software defined radios, automotive radar, digital cameras and weather radar, to name a few things. And I have absolutely no clue about how microwaves work and I'm a lousy photographer, but I can still do a good job because at the abstraction level where I work I don't need to understand all these things. But if I were also required to learn, let's say, Fortran, because that's what was traditionally used for math-heavy applications, well, then I would probably start looking for jobs elsewhere. And the same goes for verification engineers. Give them a spec, tell them what to do, give them a familiar programming language, and they'll probably do just fine. I definitely think it's preferable to have someone who does know how chips work on the team, but it doesn't have to be all of them.

So let's assume then that we can have verification engineers who don't have to know Verilog. What does that mean? Well, it means that our pool of potential candidates has grown a thousandfold. I can tell you for sure that it will be a lot easier to find good verification engineers than to find good verification engineers who also happen to know Verilog. And if you have ever experienced how hard it is to get good verification engineers, then this is something you will greatly appreciate. And it's not just the number of developers that's growing. The whole flourishing ecosystem of libraries, forums and examples around popular languages like Python makes the Verilog ecosystem look like a wasteland, and this means you can reuse much more existing code and involve your software friends in better ways.

So what's the solution then? We need to enable software developers to create and verify chips. In this article we are looking at the verification side, but I suggest looking at companies like ChipFlow to see what's happening on the design side as well. And Python is a good bet right now. It might be Go or Rust or something completely different in a couple of years, but right now Python is already widely used as a glue language in chip development environments, like Perl was 10-20 years ago. And we see more and more EDA tools growing Python bindings. I'm not sure the latter is a purely positive thing, but we'll see.

And when it comes to Python and verification, I have said many times by now that I believe cocotb will be one of the important technologies in the coming years. It is a mature technology that is already adopted by large and small companies, and you can find ads from companies looking to hire for this skill. And just like we saw with UVM, being able to hire for a certain skill without having to train people for your home-built verification framework is a time and money saver. Another thing that speaks for cocotb is that it uses your regular RTL simulators. This means it poses no threat to the EDA vendors. It just enhances their offering and they can continue to sell licenses for their tools. And with cocotb being a project governed by the FOSSi Foundation, we clearly see how much more interested the EDA vendors are in collaborating on cocotb compared to many other free and open source silicon projects. Of course, it also works with your favorite open source simulators like Icarus, GHDL or Verilator. This means the proprietary EDA vendors need to compete with the open source tooling on equal terms, where they need to flex the strengths of their tooling rather than rely on the artificial lock-in created because none of the open source tools have any UVM support to speak of.

So, to sum things up. UVM has been a massive success and has seen industry-wide adoption over the past ten years. But the most important thing UVM did was probably to show the industry the benefit of having a common framework, not to be the best framework in itself. My prediction is that UVM will see a slow (everything in EDA is slow) decline in the coming years. It will stay relevant for a long time, but gradually be replaced by frameworks written in more common languages, and it's a good bet to get to know cocotb specifically a little better. So I think it's time to consider whether UVM sparks joy. Otherwise, it's time to thank it and say goodbye.

Now, if the industry could just agree on a common format for describing IP cores and interfacing EDA tools. Oh well. That's another battle for another day.
